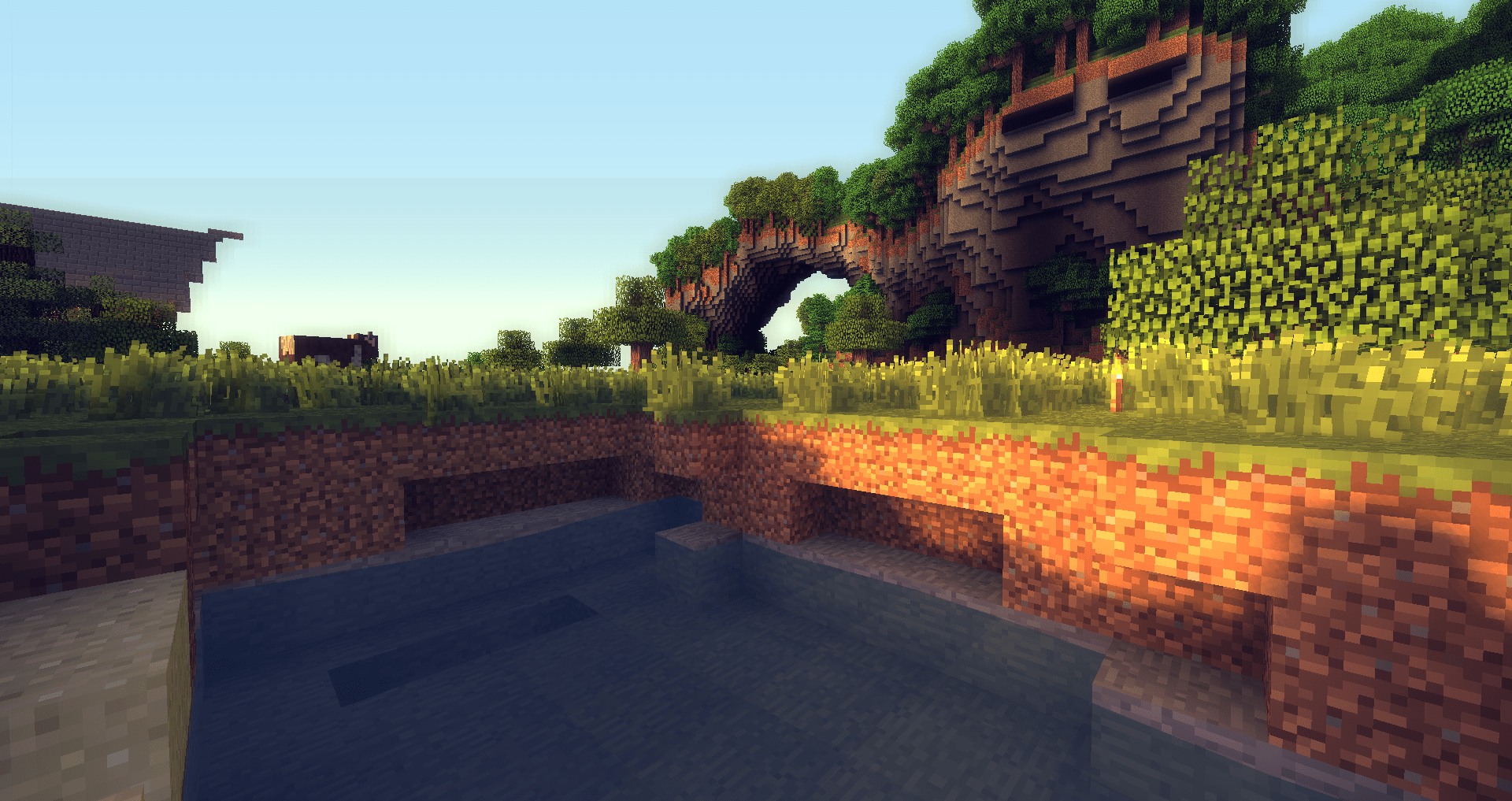

Exile: Voxel Rendering Pipeline

No, Exile is not technically a “voxel” engine. A real voxel engine unifies objects, textures, and more into colored voxel data, rendering them via raymarching/marching cubes/dual contouring/etc., and can target realism. Instead, Exile is a “voxel” engine in that it’s a traditional 3D engine that happens to focus on representing and drawing textured cubes (like Minecraft and related games). Some have worked around this linguistic confusion by christening the technique “Swedish cubes,” but for the purposes of this post, please assume that voxels imply textured cubes.

Voxels

Representing a game world with voxels provides several distinct advantages:

Interactivity: Voxels provide an obvious way for the player to build, edit, and destroy the world however they wish.

Systems: Having a natural world grid allows procedural generation, NPCs, logistic systems, and the like to seamlessly integrate into the environment.

Performance: Many optimizations (lighting, meshing, AO, culling, pathing, etc.) are available when working with voxel data, resulting in good performance scaling.

Aesthetics: Subjectively, voxel worlds can look better from farther distances than traditional mesh-based worlds, all while being more compact.

Of course, voxels are not without some disadvantages:

- Not for realism, and can look unnatural when mixed with non-voxel elements.

- No natural LOD algorithm (though there is for marching cubes).

- Likely others I haven’t run into.

So voxels are cool, but hasn’t this already been done by Minecraft, Minetest, Creativerse, FortressCraft, etc? That’s not even counting the many games that render voxel worlds with a non-cube asethetic. Well, maybe it has, but I believe there’s work to be done in integrating the technical advancements of a variety of techniques, from user interaction and world generation to new representations and graphics technology.

One of the motivating factors for this project was frustration with the state of modded Minecraft: as complex and interesting as the game can be, it suffers from countless technical problems, performance bottlenecks, and compatibility issues, all of which could be solved under a new platform built with extensibility and performance as top priorities. This is my aspiration for Exile, but the project has also served as a learning experience: deciding to start from absolute scratch has led me to learn about the interconnected workings of everything in a 3D engine, from platform services to data structures to debug interfaces and voxel worlds.

World

In Exile, the world is abstractly represented as an infinite (well, UINT32_MAX x UINT32_MAX x 511) field of blocks. Block queries can be made at any position in the world. Of course, there’s not enough memory on the planet to store that much information, so the world is sparsely populated by 31 x 31 block chunks. When a chunk of the world is needed, for example, because the player is near enough to render it, it is either retrieved from the world (a hash map) or generated and added on-demand.

The following describes Exile’s general pipeline for going from a flat chunk of voxel data to rendering an output: more detail on how the world is generated, persisted, rendered, and edited will be published in a future post.

Techniques

In developing Exile, I explored several techniques for rendering voxel data, and have mostly settled on a hybrid mesh-geometry solution.

Instancing

This technique is the simplest and most obvious: instance a single cube mesh for each block you want to render. This works surprisingly well in the “worst” case, a uniform checkerboard of blocks (meaning all faces must be rendered). However, given the massive overdraw in common cases and lack of flexibility, this technique is not very useful in the end.

Geometry shaders

Using geometry shaders to generate raster data encompasses a variety of options, including generating entire blocks (with up to three faces visible) and generating triangles based on face data. These techniques tend to be the most space-efficient, but suffer in complexity and geometry shader performance.

Meshing

Meshing also refers to several techniques. The basic idea is to generate a traditional 3D mesh from voxel data. Rendering static meshes is just about the fastest thing a GPU can do, so meshing tends to be the most performant approach—but can require unacceptable amounts of GPU memory. However, memory limitations may be worked around by using fixed-precision attributes. For example, if all vertices are at integer coodinates within a chunk, the coordinates only need enough bits to cover the size of the chunk—certainly not a 12-byte

vec3. Further, this approach can be combined with geometry shaders to render directly from an optimized mesh of per-face data rather than per-vertex data.

I found the approach with the highest absolute performance to be meshing, settling on a meshing pipeline that works with compact quads: generate an optimized face mesh for each chunk, then feed the mesh faces (each represented by four compact vertices) through an instanced vertex shader that unpacks the vertices, assembles them into a quad, and passes them along to the fragment shader.

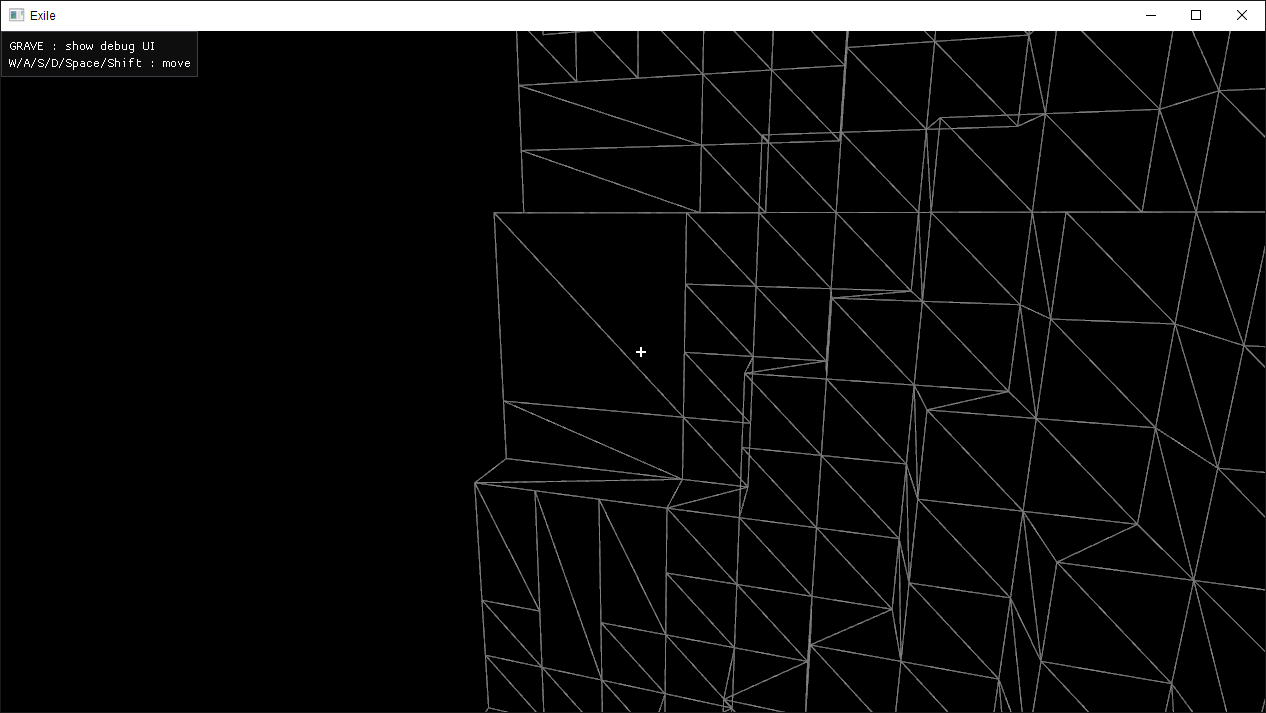

Meshing

The first step in the voxel rendering pipeline is creating an optimized, render-able mesh from each chunk. This means converting from flat block data to a list of render-able faces. Exile uses a greedy meshing algorithm to both cull invisible faces and combine identical faces into larger blocks, greatly reducing the overall number of quads. Greedy meshing provides a good trade-off between creating compact meshes and latency in (re)generation. I highly recommend reading this article for an exploration of the algorithm.

Result of Greedy Meshing

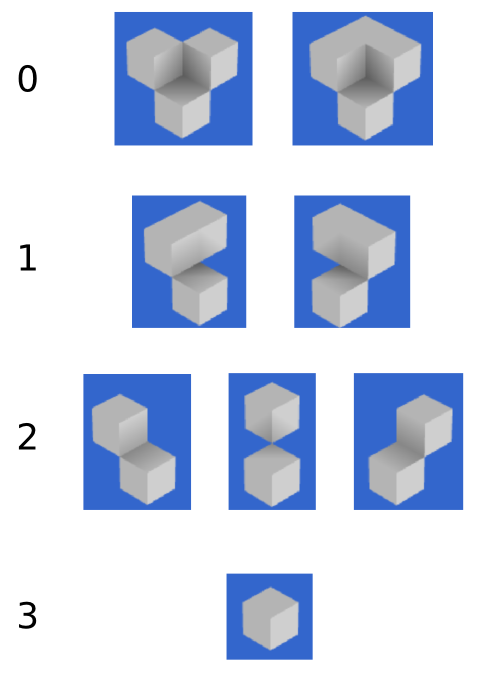

Ambient Occlusion

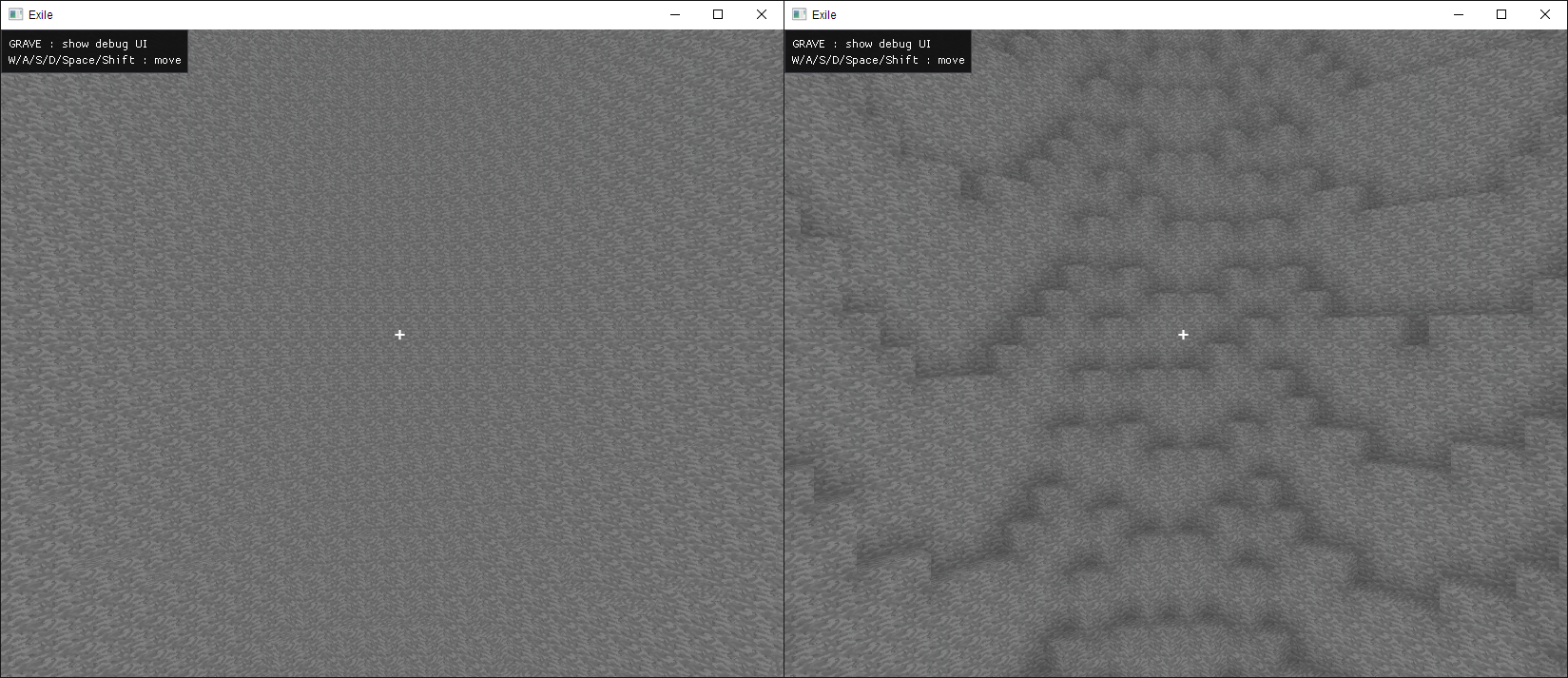

Even without basic lighting, ambient occlusion can provide a reasonable level of definition in corners and facets. Ambient occlusion is very lightweight to implement in a static voxel world: on mesh generation, an occlusion value can be calculated at each vertex and baked into the mesh for the renderer to refer to.

There are four levels of occlusion possible for any vertex in the world, as shown here:

Credit 0fps.net

Notice that the occlusion value of the vertex is only dependent on the opacity of the three upper adjacent blocks (if all are filled, occlusion is always 0). Hence, for any vertex in the world, one can find which of the four blocks above the vertex is air, then calculate occlusion based on the other three (two sides and the corner). If both sides are filled, the point is maximally occluded, but otherwise, the occlusion is simply increased for each filled block.

if(side0 && side1) {

return 0;

}

return 3 - side0 - side1 - corner;

Finally, once the 0-3 occlusion value is calculated for each vertex, the fragment shader must use this value to blend and darken the result. To do so, the shader can use the 0-3 value as an index into a uniform occlusion curve, multiplying the fragment color by the specified occlusion factor. In Exile, the default curve is 0.75, 0.825, 0.9, 1.0.

However, simply assigning each vertex an occlusion value and interpolating between them will not produce the correct results over a quad: by default, the GPU will interpolate vertex attributes per triangle in barycentric coordinates. To get around this, we must specify the occlusion values of all four vertices in a face for each vertex. With this information, the fragment shader can interpolate the occlusion bi-linearly based on its texture u/v coordinates, correctly blending occlusion across the quad.

For more detail, refer to this article. (Implementation)

With and without ambient occlusion (no other lighting)

Vertex Format

Each face output by the meshing system contains four vertices (one for each corner) represented as uvec2s (eight bytes).

00000000000000000000000000000000 00000000000000000000000000000000

|------||------||------||------| |----------||----------||------|

x z v u y id ao

The first uint contains the x and z positions of the vertex within the chunk, as well as the u and v coordinates for the texture at that vertex. Each value is one byte, hence ranges from 0-255. This is why chunks in Exile are 31 x 31: all x, z, u, and v positions are divided by eight before projection into the world, so we have a range of 0-31.785 blocks with the option to place a vertex anywhere on a 1/8th block grid.

Unfortunately, because faces are represented by four vertices, one at each corner, 32 x 32 chunks would require vertices with position 32 (256), which is out of range. This problem can be worked around by representing faces as a single vertex and building out the extent of the face in the geometry shader (which I implemented), but the performance/compactness trade-off was not desirable.

Further, you may question why exactly we need to use u/v texture coordinates at all: if everything is just a unit quad, aren’t all vertices at a u/v endpoint? This is correct, but remember that the greedy meshing system produces quads that can cover up to an entire chunk—31 x 31—necessitating a way to signify how many times the single-block texture should be repeated across the combined face.

The second uint contains the vertex’s y position (again multiplied by eight), texture ID, and ambient occlusion values. The ID value serves as an index into a texture array containing each block texture—the sample coordinate is calculated via (u / 8, v / 8, t). Both of these values are 12 bits wide, ranging from 0-4096. Hence, chunks are 511 blocks tall, and the texture array can hold 4096 block textures. (Texture arrays are typically limited to less than 4096 slots, but the highest bits may be used for swapping array ‘banks.’)

Finally, the occlusion value is actually four two-bit attributes, representing the 0-3 occlusion values for each vertex in the associated face. Because all four values are represented in each vertex, the fragment shader can use its u/v coordinates to bi-linearly interpolate the occlusion over the quad.

Rendering

Once a list of faces, each being four compact vertices, has been generated by the meshing system, it’s time to pass them to the GPU pipeline. In Exile, they are submitted in an unconventional way: because we want each quad, that is, each sequence of four vertices, to be rendered as its own triangle strip, Exile submits an instanced “quad” to OpenGL for each face. To be specific, Exile draws a four-element triangle strip with an instance for each face. The actual vertex data is gathered from instance-specific attributes describing each vertex of the face. Because there are only four vertices in each (semantic, not actual) draw call, gl_VertexID may be used to select the current vertex data from the instance attributes. Finally, the vertex shader unpacks the vertex data into floating-point formats, calculates the face normal vector, and submits the data to the fragment shader.

#version 330 core

layout (location = 0) in uvec4 v0;

layout (location = 1) in uvec4 v1;

uniform vec4 ao_curve;

uniform float units_per_voxel; // 8.0

uniform mat4 mvp;

const uint x_mask = 0xff000000u;

const uint z_mask = 0x00ff0000u;

const uint u_mask = 0x0000ff00u;

const uint v_mask = 0x000000ffu;

const uint y_mask = 0xfff00000u;

const uint t_mask = 0x000fff00u;

const uint ao0_mask = 0x000000c0u;

const uint ao1_mask = 0x00000030u;

const uint ao2_mask = 0x0000000cu;

const uint ao3_mask = 0x00000003u;

flat out uint f_t;

flat out vec4 f_ao;

out vec2 f_uv;

out vec3 f_n;

struct vert {

vec3 pos;

vec2 uv;

vec4 ao;

uint t;

};

vec3 unpack_pos(uvec2 i) {

return vec3((i.x & x_mask) >> 24,

(i.y & y_mask) >> 20,

(i.x & z_mask) >> 16) / units_per_voxel;

}

vert unpack(uvec2 i) {

vert o;

o.pos = vec3((i.x & x_mask) >> 24,

(i.y & y_mask) >> 20,

(i.x & z_mask) >> 16) / units_per_voxel;

o.uv = vec2((i.x & u_mask) >> 8,

i.x & v_mask) / units_per_voxel;

o.t = (i.y & t_mask) >> 8;

o.ao[0] = ao_curve[(i.y & ao0_mask) >> 6];

o.ao[1] = ao_curve[(i.y & ao1_mask) >> 4];

o.ao[2] = ao_curve[(i.y & ao2_mask) >> 2];

o.ao[3] = ao_curve[(i.y & ao3_mask)];

return o;

}

void main() {

uvec2 verts[4] = uvec2[](v0.xy, v0.zw, v1.xy, v1.zw);

vert v = unpack(verts[gl_VertexID]);

vec3 v1 = unpack_pos(verts[0]);

vec3 v2 = unpack_pos(verts[1]);

vec3 v3 = unpack_pos(verts[2]);

gl_Position = mvp * vec4(v.pos, 1.0);

f_n = cross(v2 - v1, v3 - v1);

f_uv = v.uv;

f_ao = v.ao;

f_t = v.t;

}

The fragment shader then renders the rasterized results using the u/v/t texture coordinates and ambient occlusion values.